EASE, the Engine for AI-based Skill Evaluation, was built around a simple yet uncomfortable truth: for years, enterprises have been measuring learning, not readiness.

Enterprise skilling followed a reassuring structure. People went through training programs, completed assessments, earned certifications, and organizations moved forward with the quiet hope that learning would somehow translate into performance. On dashboards, progress looked steady. On paper, teams looked ready.

But delivery told a different story.

Projects started slipping. Senior engineers stepped in more often than expected. Professionals who were officially “certified” hesitated when systems failed in production. Learning leaders began hearing the same feedback from different teams, in different forms: people were passing assessments, but they weren’t ready for real work.

This wasn’t a failure of effort. Enterprises were investing heavily in upskilling. It wasn’t a failure of talent either. The people were capable, curious, and willing to learn.

What failed, slowly and quietly, was the way readiness was being understood.

Traditional assessments were good at measuring recall and familiarity. They showed how much someone remembered, how quickly they responded, how well they navigated known patterns. But real work doesn’t arrive as a set of familiar questions. It demands judgment, sequencing, recovery, and the ability to operate when things don’t go as planned.

As technology stacks grew more complex and AI-driven systems became part of everyday workflows, the cost of uncertainty increased. A misstep was no longer just a learning gap; it became a delivery risk. Teams needed people who could respond, adapt, and recover in real conditions, not just those who could score well in controlled ones.

Slowly, an uncomfortable realization set in. Enterprises had become very good at tracking progress, but very poor at validating capability. They could see who had completed training. They couldn’t always see who was truly ready.

That shift changed the conversation entirely. Skill validation was no longer about adding another assessment or raising the difficulty of a test. It became about proof: proof that learning could hold up under real conditions, and that confidence was earned through doing, not assumed through scores.

This is exactly the gap Nuvepro set out to close. And at the center of that effort sits EASE, the Engine for AI-based Skill Evaluation.

By grounding evaluation in real environments and observable performance, readiness stops being a hopeful assumption and becomes something tangible, something you can see, measure, and trust when the stakes are real.

The uncomfortable truth about traditional assessments

If you had to decide whether someone is truly project-ready, what would you trust more?

A multiple-choice test, manually evaluated, ending in a final score?

Or a real project scenario, executed in a live environment, observed and evaluated continuously?

Most people already know the answer. And yet, for years, enterprises have relied on the former.

Traditional assessments were built for convenience, not reality. They were easier to administer, easier to standardize, and easier to score. But over time, their limitations became harder to ignore.

Learners worked inside browser-based sandboxes with restricted tools and configurations. Deployments were simulated, not real. Integration points were skipped. Problems were broken down into isolated tasks instead of end-to-end workflows. Assessments focused on a single technology stack, even though real projects rarely do. Theory was rewarded more than application. And everyone, regardless of role, experience, or context, was measured the same way.

At the end of it all, enterprises received just one thing. A score.

What that score didn’t provide was context. It didn’t show how someone approached a problem, how they handled failure, how they debugged under pressure, or how they made decisions when there was no obvious right answer. It didn’t inspire confidence, only assumption.

And that’s where the gap became impossible to overlook.

A number cannot tell you if someone can operate in a client environment. It cannot reveal whether they can adapt when requirements change, systems fail, or constraints tighten. It can confirm exposure, but not readiness.

This is where Nuvepro’s Skill Validation Assessments, powered by EASE, take a fundamentally different approach.

Instead of choosing answers, learners are asked to perform. Instead of simulated tasks, they work in environments that resemble real projects. Instead of ending in a single final score, evaluation happens continuously, capturing how skills are applied.

The result isn’t just better assessment.

It’s clarity. Clarity on skills. Clarity on readiness. And clarity enterprises can rely on when real work begins.

Why finishing a course doesn’t automatically mean someone is ready

For years, assessments have been treated like checkpoints. You learn something, you take a test, you get a score, and the system moves on. It’s tidy, measurable, and easy to report. But it rarely answers the question that actually matters once learning ends.

At Nuvepro, this kept surfacing in enterprise conversations. Teams were trained. Courses were completed. Assessments were passed. And yet, when it was time to put people on real projects, there was hesitation.

Because passing an assessment is not the same as being ready to perform.

Real work doesn’t come with clear instructions or single correct answers. It involves uncertainty, trade-offs, broken setups, and decisions that have real consequences. That’s why Nuvepro treats assessments not as checkpoints, but as proof points: evidence that a skill can stand up in real project conditions.

Our skill validation assessments are designed to answer one clear question:

Is this individual or team ready to perform in a real project environment?

What real work actually looks like (and why assessments should reflect that)

In real projects, professionals don’t solve problems in isolation. They design solutions, configure environments, integrate tools, debug failures, and rethink decisions when something doesn’t work. That process is messy, iterative, and deeply contextual.

So instead of testing knowledge in fragments, Nuvepro built project-based assessments that look and behave like real work. These assessments are aligned to specific skill clusters and designed around industry workflows. They replicate real project problems and unfold the way actual client engagements do.

Learners aren’t judged on how fast they finish or how neatly they answer. They are evaluated on how they think, how they execute, and how they respond when things don’t go as planned. In short, they work the way professionals work on real projects.

Why roles can’t be validated with a one-size-fits-all approach

Another mistake traditional assessments make is treating all roles the same. A cloud engineer, a data engineer, and a full-stack developer operate under completely different expectations. Their decisions, tools, and responsibilities are not interchangeable.

That’s why Nuvepro uses role-based assessments alongside project-based ones. These assessments are built to mirror role-specific responsibilities. They measure end-to-end execution rather than isolated tasks and validate readiness for that exact role.

Together, project-based and role-based assessments shift validation from theoretical correctness to practical competence. They stop asking, “Does this person know the concept?” and start asking, “Can this person actually do the job?”

When assessments start looking like real work, evaluation gets harder

Designing realistic assessments is only half the problem.

Once learners are solving complex, open-ended problems, evaluation becomes significantly more difficult. There is no single correct path. Two learners might reach the same outcome in completely different ways. One might demonstrate deep understanding. Another might arrive there by accident.

Manual evaluation struggles here. It’s slow, subjective, and inconsistent, especially at scale. And focusing only on final outputs misses the most important part: how the work was done.

This is exactly why Nuvepro built EASE.

EASE: The intelligence that makes outcome-driven validation possible

EASE (Engine for AI-based Skill Evaluation) is the core engine behind Nuvepro’s assessments. It’s not an add-on or an afterthought. It’s what makes real-world validation possible at scale.

EASE doesn’t just look at final answers. It analyzes learner actions throughout the assessment. It evaluates execution quality across environments, measures the depth of skill application, and normalizes evaluation across large learner populations.

That distinction is what fundamentally changes how skills are validated.

How AI makes skill validation more accurate and more fair

Traditional assessments struggle with subjectivity and scale. EASE addresses both by applying AI across multiple dimensions.

It evaluates submissions in context, not against a single expected output. This allows it to assess approach, efficiency, correct tool usage, and logical decision-making. It also analyzes behaviour: how learners arrive at solutions, how they handle errors, and whether they attempt optimizations.

This mirrors how performance is judged in real projects.
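To make the idea of judging a submission on several dimensions concrete, here is a minimal Python sketch. It is an illustration only, not how EASE is implemented: the article names the four dimensions (approach, efficiency, tool usage, decision-making), but the weights, the 0–10 rating per dimension, and the function name `rubric_score` are assumptions introduced for this example. A simple weighted rubric stands in for whatever AI-based evaluation EASE actually performs.

```python
# Hypothetical weights (in percent) for the four dimensions the article names.
# These values are assumptions for illustration, not published EASE settings.
WEIGHTS = {
    "approach": 30,
    "efficiency": 20,
    "tool_usage": 20,
    "decision_making": 30,
}


def rubric_score(ratings: dict[str, float]) -> float:
    """Combine per-dimension ratings (each 0-10) into one weighted 0-10 score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0 <= ratings[name] <= 10:
            raise ValueError(f"{name} rating must be between 0 and 10")
    # Weights sum to 100, so dividing by 100 keeps the result on a 0-10 scale.
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS) / 100


if __name__ == "__main__":
    example = {"approach": 8, "efficiency": 6, "tool_usage": 9, "decision_making": 7}
    print(rubric_score(example))
```

The point of the sketch is that two learners with the same final output can still receive different scores, because how they got there is rated separately from what they produced.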

At the same time, EASE ensures consistency. Manual evaluation varies from reviewer to reviewer. AI-driven normalization reduces bias and standardizes scoring across cohorts, teams, and geographies. A skill validated in one group means the same thing in another.

The focus remains on outcomes achieved, not just tasks completed, making validation meaningful for real deployment decisions.

Turning raw scores into readiness signals enterprises can trust

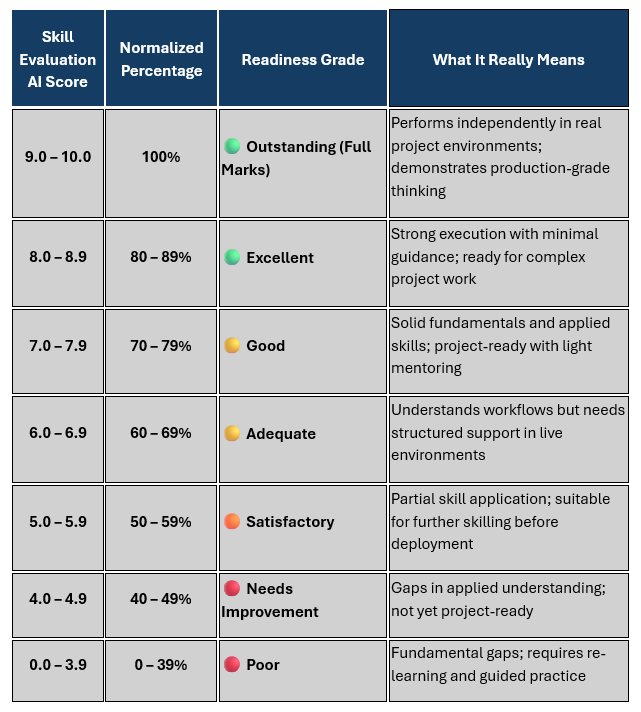

To keep results transparent and genuinely useful, EASE (Engine for AI-based Skill Evaluation) follows a clear and consistent way of scoring and normalizing every assessment. Each submission is first evaluated on a 0–10 scale. But that number isn’t the final output. It’s normalized into a percentage and then translated into a grade that reflects real readiness, not just performance in a test environment.

These grades aren’t cosmetic labels added for reporting. They indicate how ready a learner actually is to apply the skill in real work scenarios. This makes it easier for enterprises to understand skill depth, compare results across different assessments, and clearly see where deployment makes sense and where support or intervention is still needed. For L&D teams and managers, assessment data finally becomes practical: something they can act on, not just store.
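The scoring flow described above (a raw score on a 0–10 scale, normalized to a percentage, then translated into a grade) can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the article does not publish the grade bands, so the cut-offs and labels in `GRADE_BANDS`, along with the names `ReadinessResult` and `to_readiness`, are hypothetical placeholders rather than EASE’s actual values.

```python
from dataclasses import dataclass

# Hypothetical grade bands as (minimum percentage, label), highest first.
# The real EASE cut-offs and labels are not given in the article.
GRADE_BANDS = [
    (85.0, "Project-ready"),
    (65.0, "Ready with support"),
    (40.0, "Developing"),
    (0.0, "Needs intervention"),
]


@dataclass(frozen=True)
class ReadinessResult:
    raw_score: float   # score on the 0-10 scale
    percentage: float  # normalized to 0-100
    grade: str         # readiness label derived from the percentage


def to_readiness(raw_score: float, max_score: float = 10.0) -> ReadinessResult:
    """Normalize a raw score to a percentage and map it to a grade band."""
    if not 0.0 <= raw_score <= max_score:
        raise ValueError(f"raw_score must be between 0 and {max_score}")
    percentage = raw_score * 100.0 / max_score
    # Pick the first (highest) band whose floor the percentage reaches.
    grade = next(label for floor, label in GRADE_BANDS if percentage >= floor)
    return ReadinessResult(raw_score, percentage, grade)


if __name__ == "__main__":
    for score in (8.5, 7.0, 2.0):
        print(to_readiness(score))
```

Keeping the bands in a single table means the same raw score always maps to the same grade, which is what allows results to be compared across different assessments.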

What enterprises finally get when skills are truly validated

When skill validation is powered by EASE, enterprises stop relying on surface-level metrics. The conversation shifts away from how much learning activity happened and toward what people can genuinely do.

Instead of tracking course completion numbers, leaders can clearly see who is project-ready, which teams can be deployed with confidence, and where real skill gaps could affect delivery. This clarity helps organizations onboard faster, staff projects more confidently, reduce risk during execution, and deliver outcomes more consistently.

Skills are no longer assumed based on attendance or completion. They are verified through performance, validated through consistency, and trusted across the organization.

The quiet advantage that changes enterprise skilling

EASE (Engine for AI-based Skill Evaluation) doesn’t try to be loud or flashy. It works quietly in the background of every Nuvepro skill validation assessment, doing the hard work of evaluating, normalizing, validating, and proving capability.

By shifting focus from learning activity to learning impact, EASE helps enterprises move from raw scores to skill intelligence, from completion tracking to readiness validation, and from guesswork to evidence-based decisions.

That’s why Nuvepro’s assessments don’t just test skills. They establish trust in them.

And in today’s enterprise landscape, trust in skills isn’t just important; it’s a real competitive advantage.

Ready to see what trusted skill validation looks like?

If you’re still relying on course completions and raw scores to make critical talent decisions, it’s time to see what changes when skills are actually evaluated, validated, and trusted.

Nuvepro’s EASE is the engine that powers AI-based skill evaluation across Nuvepro assessments, quietly analyzing performance, normalizing outcomes, and translating results into clear readiness signals enterprises can act on.

Whether you’re onboarding new hires, preparing teams for deployment, or reducing risk in project staffing, EASE helps you move from assumptions to evidence.

Try Nuvepro EASE (Engine for AI-based Skill Evaluation) and experience what it means to make decisions based on proven capability.